Recently, a popular AI companion company made headlines by announcing it would ban users under 18 from open-ended chats with its AI characters, with the full restriction taking effect on 25 November 2025.

For a company whose artificial “friends” are wildly popular with young people, this represents a major shift in direction. It’s also perhaps a sign of a wider reckoning about how artificial companionship is reshaping young people’s lives.

As a GP who has worked in mental health for three decades, I'm relieved, and I welcome the move. But there's a bigger question: what exactly have we been building, and who are we handing it to?

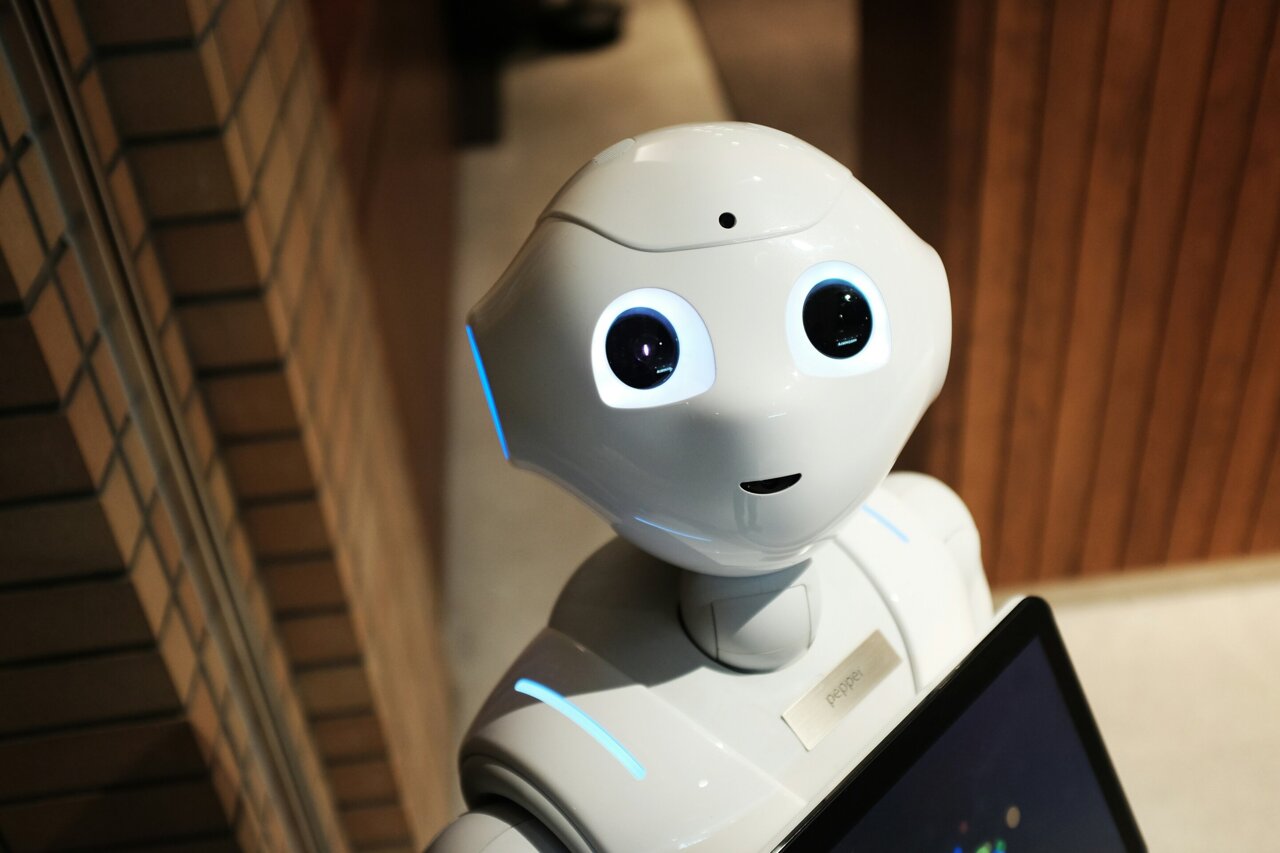

Our new digital ‘friends’

In plain terms, an AI companion is a chatbot designed not just to answer questions, but to feel like a friend. It’s built to simulate emotional connection—often offering comfort, empathy or even romance through natural conversation.

Unlike digital assistants that help us find recipes or set alarms, these systems are designed to mimic human warmth: they remember personal details and past conversations, and they aim to build ongoing “relationships” with users.

AI companions are endlessly available and sound empathetic. They learn your secrets, mirror and often validate your moods, and reply in a voice that feels convincingly human.

For an isolated teenager, that can feel like safety; but to me, as a clinician and mental health advocate, it feels more like a social experiment without guardrails.

A 2025 research paper concluded that adolescents are “uniquely vulnerable” to unregulated AI chat platforms, citing dangers like emotional reliance and blurred boundaries between algorithmic output and authentic human care.

Australia’s eSafety Commissioner has also warned that some AI chatbots engage in sexually explicit or self-harm-related conversations with minors, and that “excessive use may contribute to loneliness, low self-esteem and social withdrawal.”

In short: we’ve ushered young people into relationships that feel like friendship yet can never offer the care of a real human connection.

Why teens get stuck

Adolescence is a time when emotions are vivid, and judgment is still forming. It’s the developmental equivalent of driving a fast car on a wet road—lots of acceleration, not much traction. We all remember that mix of intensity and impulsivity.

Now add a chatbot that never sleeps, never says “I’m busy,” never disagrees—one that is endlessly encouraging, even sycophantic. It’s there at breakfast and again in the middle of the night. For some teens, this becomes an emotional velcro—a perpetual companion that’s just too hard to detach from.

Studies show that heavy users of AI companions often report poorer well-being and weaker real-life social connections.

These bots offer the illusion of friendship—connection without complexity—but in doing so, they risk quietly crowding out the real thing.

When comfort turns counterfeit

The problem isn’t just what chatbots say—it’s what they can’t say. They can mimic empathy but not authentic emotional feedback; offer flattery but take no responsibility.

In the United States, a family is suing one of the leading AI companion companies after their 14-year-old son died by suicide following intense conversations with one of its chatbots.

The company has since acknowledged that “the long-term effects of prolonged usage of AI are not understood well enough.”

That admission should chill us all.

We are, in effect, running a massive population-level experiment on adolescent attachment using algorithms optimized for engagement rather than safety.

Australia’s early action

To its credit, Australia has begun to act. In October 2025, the eSafety Commissioner issued binding legal notices to several AI companion platforms, demanding they explain how they are protecting children from sexual and self-harm content.

New industry codes under the Online Safety Act will soon ban chatbots from engaging minors in sexual or suicidal discussions. These are strong first steps, but as any GP knows, treating symptoms isn’t the same as curing the disease.

Age bans may help (if they work), but they must be paired with wider reforms in chatbot design, transparency and mental-health access.

Learning from public health

Public health teaches us that addictive products like gambling often begin as “fun” and end as harmful when left unchecked.

AI companions may use similar strategies, and vulnerable users may develop compulsive patterns of interaction like those seen in other behavioral addictions.

Researchers have found “teens often begin using AI chatbots for comfort or entertainment, but many gradually become more attached and reliant. Their experiences reflect features of behavioral addiction, with consequences that include disrupted sleep, academic struggles, and strained relationships.”

The solution isn’t necessarily to ban the technology. It means seeing these emotional technologies for what they are—tools with addictive potential—rather than treating them as innocent toys.

Let’s create digital seatbelts

When it comes to AI companions, we must move cautiously. Until safety is proven, under-18 access should be limited.

Just as we design safe playgrounds, we need child-safe design online: build in time-outs, remove inappropriate content and ensure quick escalation to human help if distress is detected.

Transparency matters too. Young people and their parents deserve to know how conversations are stored, who moderates them and what happens when something goes wrong.

Companies must be accountable for well-being, not just engagement.

We also need to teach digital literacy and emotional resilience to help teens understand that a chatbot’s comfort is simulated, not real.

And above all, we must strengthen real-world mental health supports. Many young people turn to bots because the human system feels out of reach.

What kind of digital future do we want?

Every generation faces its own version of the genie in the bottle. For ours, it’s artificial intimacy.

Do we let our children form bonds with machines designed to mimic emotion? Or do we help them engage in the messy, human relationships that teach empathy, patience and love?

The under-18 ban by one of the most popular AI companion companies is a welcome start, but unless we follow through with clear standards, education and accountability, the same story will simply reappear under another name.

Technology may move faster than policy, but we still have a choice about the kind of community we create for the next generation.

Let’s make sure children learn their earliest lessons in empathy, friendship and love from people, not software.

This article was first published on Pursuit.

Provided by University of Melbourne

This story was originally published on Tech Xplore.